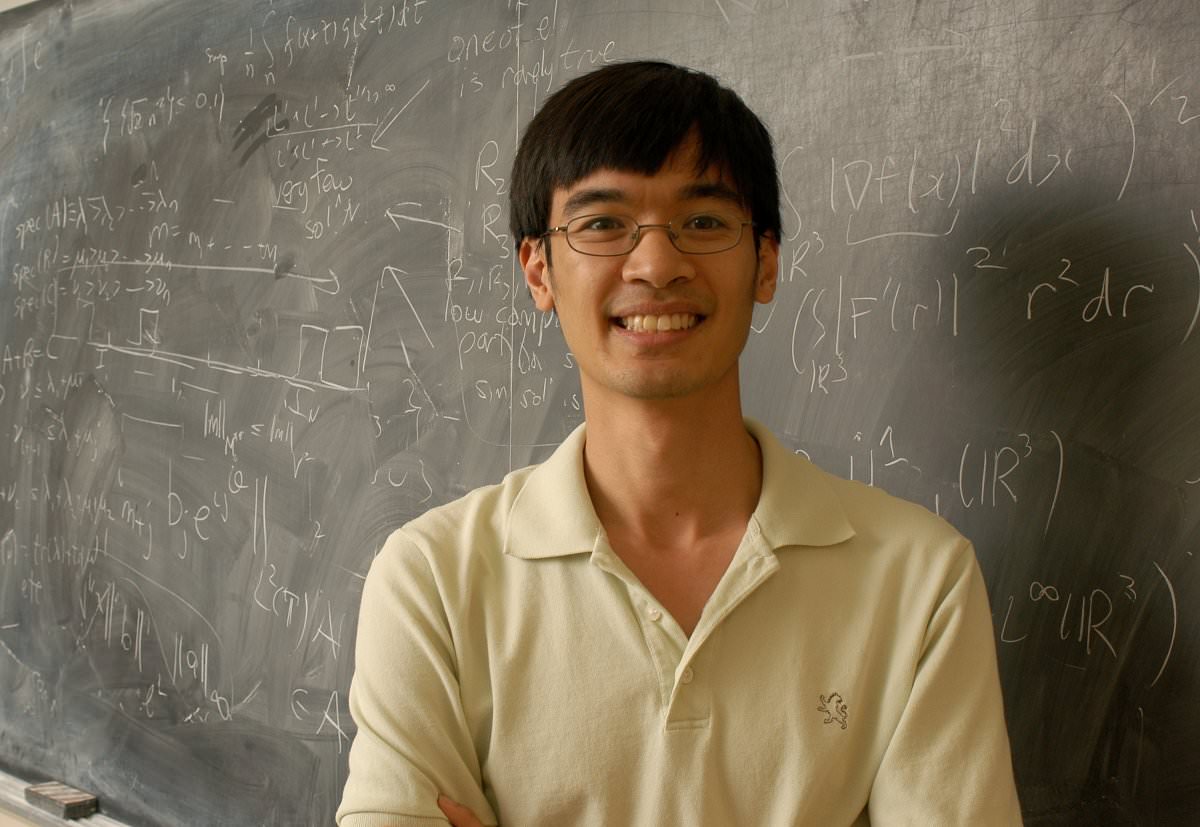

This has been discussed several times in the past, see:

- Have You Tried Hiring People?, a LW post.
- Greg Coulbourn’s “Mega-money for mega-smart people to solve AGI Alignment”.
- Short discussion on MIRI’s Facebook page.
- Comments arguing for Terence Tao specifically

But I’m not aware of anyone that has actually even tried to do something like this.

Of special interest is this comment by Eliezer about Tao:

“We'd absolutely pay him if he showed up and said he wanted to work on the problem. Every time I've asked about trying anything like this, all the advisors claim that you cannot pay people at the Terry Tao level to work on problems that don't interest them. We have already extensively verified that it doesn't particularly work for eg university professors.”

So if anyone has contacted him or people like him (instead of regular college professors), I’d like to know how that went. Otherwise, especially for people that aren’t merely pessimistic but measure success probability in log-odds, sending that email is a low cost action that we should definitely try.

Edit: I’ve been informed that someone with much better chances of success will be trying to contact him soon, so the priority now is to convince Demis Hassabis (see further below) and to find other similarly talented people.

So you (whoever is reading this) have until June 23rd to convince me that I shouldn’t send this to his address:

Title: Have you considered working on AI alignment?

It is not once but twice that I have heard leaders of AI research orgs say they want you to work on AI alignment. Demis Hassabis said on a podcast that when we near AGI (i.e. when we no longer have time) he would want to assemble a team with you on it but, I quote, “I didn’t quite tell him the full plan of that”. And Eliezer Yudkowsky of MIRI said in an online comment “We'd absolutely pay him if he showed up and said he wanted to work on the problem. Every time I've asked about trying anything like this, all the advisors claim that you cannot pay people at the Terry Tao level to work on problems that don't interest them.”, so he didn’t even send you an email. I know that to you it isn't the most interesting problem to think about, but it really, actually is a very very important and urgent completely open problem. It isn’t simply a theoretical concern: if Demis’ predictions of 10 to 20 years to AGI are anywhere near correct, it will deeply affect you and your family (and everyone else). If you are ever interested you can start by reading the pages linked in EA Cambridge’s AGI Safety Fundamentals course or the Alignment Forum.

That means convincing me that this is a net negative in expectation. The worst thing I can think of that can realistically happen is this leading to something like the Einstein-Szilard letter.
